By Aaryan Mehta (2024) and Moksh Ahuja (2024)

The rise of NVIDIA since the spring of 2023 has been truly sensational. Although the stock price only began climbing at the onset of 2023, the company’s growth had begun much earlier. But before diving into that, let’s look at what NVIDIA does.

NVIDIA is the largest GPU (Graphics Processing Unit) producer in the world. GPUs, in essence, are highly specialized and powerful cousins of your CPU. For the right kind of work, a GPU is much faster and more efficient because it breaks simple, repeatable tasks into chunks and performs them in parallel, and this is essentially what parallel computing is. The largest and by far the most interesting use of GPUs is in, well, Artificial Intelligence (yes, the buzzword you see everywhere). The boom of Artificial Intelligence has been meteoric. Every major company seems to want to do something with it, and at this point, it feels like they’ll manage to add AI to cleaning your own room (which I hope they do because I am tired of doing it). Anyway, NVIDIA also produces a lot of hardware for AI and Machine Learning. These NVIDIA components are versatile and can perform complex calculations, render graphics, and perform pattern recognition and matching, all of which are crucial for AI and ML models. These chips, and the software NVIDIA produces to accompany them, are now widely used by industry giants for multiple applications. Let us look at how NVIDIA built its empire over the years.
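Before moving on to the history, here is a minimal sketch of the "break the task into chunks and run them in parallel" idea described above. It is only an illustration: Python threads stand in for GPU cores (an assumption made for readability, since a real GPU runs thousands of hardware threads and Python threads do not speed up pure arithmetic), and the helper names `split_into_chunks`, `square_chunk`, and `parallel_square` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def square_chunk(chunk):
    """Apply the same simple operation to every element of one chunk."""
    return [x * x for x in chunk]


def split_into_chunks(data, n_chunks):
    """Split data into n_chunks contiguous pieces of nearly equal size."""
    size, extra = divmod(len(data), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def parallel_square(data, n_workers=4):
    """Square every element by handing each chunk to its own worker."""
    chunks = split_into_chunks(data, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        # pool.map returns results in the same order as the input chunks.
        results = pool.map(square_chunk, chunks)
        return [value for part in results for value in part]


numbers = list(range(10))
squares = parallel_square(numbers)
print(squares)
```

The point is the shape of the computation rather than the speed: every chunk gets the same simple operation and no chunk depends on another, which is exactly the kind of work a GPU is built for.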

In April 1993, three computer scientists, Jensen Huang, Chris Malachowsky, and Curtis Priem (the latter two of whom were destined to become the next Michael Collins) left their high-paying jobs to found NVIDIA (yes, just like every startup ever). Their initial focus was graphics-based computing and video games, for which they raised 20 million USD. In 1999, they went public and released the GeForce 256, and Microsoft soon awarded them a contract to supply the graphics hardware for its Xbox gaming console.

In 2006, Stanford University researchers found that GPUs could handle much more than graphics; they could do math faster than a gold medalist at the International Math Olympiad (basically astronomically fast). Jensen Huang, at that time, made the call to invest NVIDIA’s time and money into making its GPUs programmable, a move that was to revolutionize AI. Soon, AI research operations and firms everywhere started using NVIDIA chips. A major milestone came in 2012 with AlexNet, an AI that could classify images, which was built on NVIDIA chips. Meanwhile, its Tegra processors started showing up in devices, and the GeForce series GPUs were a big hit in gaming, mobile devices, and, more importantly, automobiles. By 2014, Honda, Volkswagen, BMW, Audi, Lamborghini, and Tesla were using NVIDIA processors and chips in their mass-produced cars.

Up until then, despite all these giant strides in AI and GPUs, NVIDIA’s stock price had remained relatively flat and unimpressive. However, this was to change in 2016. There was strong growth across NVIDIA’s partner industries, particularly automotive and gaming, and the stock surged 224%. NVIDIA entered into partnerships with multiple car companies to develop self-driving cars. Its DRIVE kit was going to be a big hit, as its AI could process large amounts of sensor data and make real-time decisions, which is crucial for autonomous vehicles. For this, NVIDIA was named Yahoo Finance’s Company of the Year. 2018 then seemed to be the tipping point for NVIDIA, the point where they started living out their fantasies. Their stock price, revenue, sales, everything was surging. NVIDIA had become the leader in the AI chip market, with no one seriously competing with them. The demand for GPUs skyrocketed, and NVIDIA seemed to be having the time of their lives.

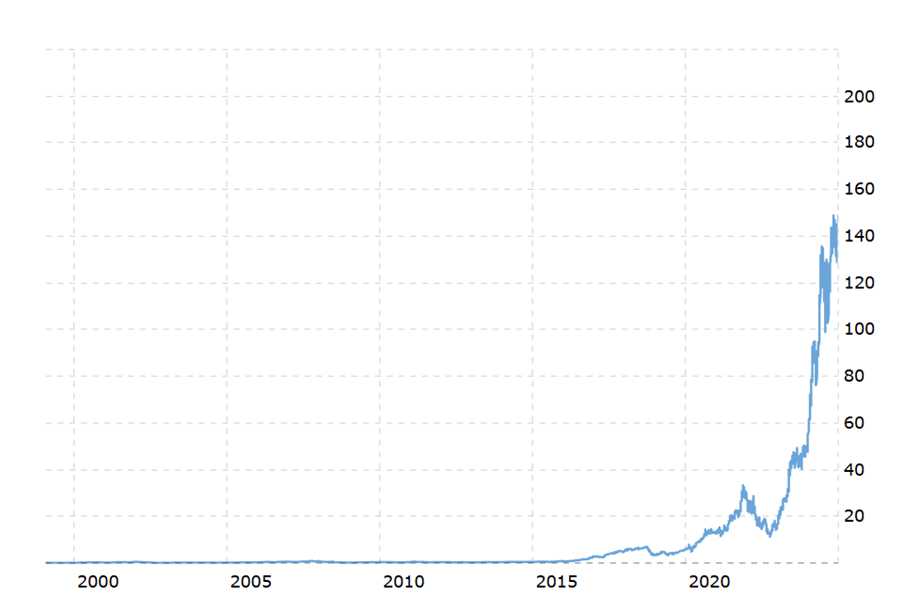

NVIDIA kept developing and releasing new chips like the RTX 3060, 3070, 3080, and 4070, and its platforms, such as Omniverse and Tegra, were being used globally. In 2023, NVIDIA had its biggest break yet. Its stock increased 239%. NVIDIA employees were retiring in their 30s and 40s because they now had millions in stock. They seemed to be having champagne problems. By 2024, NVIDIA’s market value had increased by 2 trillion USD, and its stock kept climbing and didn’t seem to fall at all. To put this into perspective, take a look at their stock chart over the years. Their growth was incredible and still seems too good to be true (yes, foreshadowing).

NVIDIA overtook market giants like Microsoft, Alphabet (Google), and Amazon to become the second most valuable company in the world, behind only Apple. It became the biggest global gainer in market cap. Its Blackwell GPU was named product of the year. This seemed like a fairy tale for NVIDIA. However, in stories like these, one often wonders: is NVIDIA really the do-good protagonist who can do nothing wrong? Is the story really that crystal clear and beautiful?

There’s obviously more here than meets the eye. On September 3, 2024, NVIDIA suffered a shocking $279 billion loss in market cap in a single trading day. The treasured stock plummeted by 9.5%. This marked the biggest single-day loss in value for a U.S. company in history.

Let’s dive into the economics.

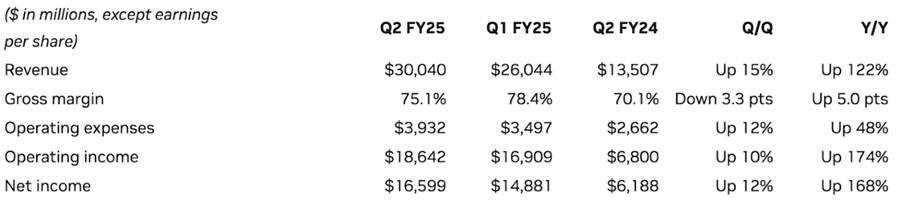

By the looks of it, NVIDIA’s numbers look spotless, with a 122% year-on-year increase in revenue and a 75.1% gross margin. The magic lies in their data center business, which is essential for training the AI models of several companies, including Alphabet and Apple. The recently introduced Gemini on your Android phone and the iPhone 16 “built ground up for Apple Intelligence” largely depend on NVIDIA’s data center business, which accounts for 87% of their revenue. Yeah, the GPU manufacturing giant’s actual business lies in AI. But the devil lies in the details. 45% of their data center revenue comes from their top 5 customers, who are more than willing to work on their own data centers to keep their reliance on NVIDIA in check. The problem is not new. Last year that number was 40%, which means that the concentration has, in fact, worsened. After the release of their numbers, the stock fell by 8.5%, which seems counterintuitive because they reported $30 billion in revenue, whereas the projected value was only $28 billion. The downward trend can be attributed to the delay in the rollout of their next-generation Blackwell chips. In NVIDIA’s defense, they clarified that the delay was due to a change in their Blackwell GPU mask, which was expected to improve their production yield.
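To make the figures above easier to weigh against each other, here is a rough back-of-the-envelope sketch. It uses only the rounded numbers quoted in this section (so the outputs are approximations, not reported financials), and the variable names are ours.

```python
# Rounded figures quoted in the text (revenue in USD billions, shares as fractions).
reported_revenue_bn = 30.0
projected_revenue_bn = 28.0
data_center_share = 0.87      # share of total revenue from data centers
top5_share_now = 0.45         # share of data center revenue from the top 5 customers
top5_share_last_year = 0.40

# How far revenue came in above the projection, in percent.
beat_pct = (reported_revenue_bn - projected_revenue_bn) / projected_revenue_bn * 100

# Approximate dollars behind the concentration figures.
data_center_revenue_bn = reported_revenue_bn * data_center_share
top5_revenue_bn = data_center_revenue_bn * top5_share_now

# Change in customer concentration, in percentage points.
concentration_change_pts = (top5_share_now - top5_share_last_year) * 100

print(f"Revenue beat vs projection: {beat_pct:.1f}%")
print(f"Data center revenue: about ${data_center_revenue_bn:.1f}B")
print(f"Revenue from top 5 customers: about ${top5_revenue_bn:.1f}B")
print(f"Concentration change: {concentration_change_pts:.1f} percentage points")
```

On these rounded inputs, a beat of roughly 7% came alongside more than a third of total revenue resting on just five customers, which helps explain why the market was not purely celebratory.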

While all this talk about numbers may provide some indication of the future, it’s the fundamentals that make or break a company. So, don’t worry if economics is not really up your alley; let’s dive into some of the more fundamental reasons for the potential downfall of NVIDIA’s stock price. The sentiment among the experts is that it is the market’s FOMO that is driving NVIDIA’s stock price rather than its actual value. We have many examples from the past that support their case, but is this time different? Let’s find out.

Hype: Virtually all AI today depends on NVIDIA in some way or the other. Hence, it is not wrong to say that NVIDIA’s anticipated market movements are directly tied to the future of AI. Many experts directly compare today’s AI bubble to the dot-com bubble of the 1990s, and it is fair to call the current situation a spiritual sequel to the conditions of the 90s. When the dot-com bubble burst, most of the companies whose stocks had been hyped up on the back of the World Wide Web simply went bankrupt. Experts believe that the same is bound to happen to many companies when the AI bubble bursts. Amazon is one of the largest companies that survived the dot-com bubble, and it is quite plausible that NVIDIA will be the Amazon of the AI bubble. But Amazon suffered a staggering drop of 92% in its stock value at the time of the crash, and it would not be until 2009 that it hit its old peak again. Hence, tough times may well lie ahead for NVIDIA. So, this was the hype aspect of the stock. But what’s the actual value addition by NVIDIA today?
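The reason a 92% drop takes so long to undo is simple arithmetic: losses and gains are not symmetric. The small sketch below works this out; the function name `gain_needed_to_recover` is ours, and the only inputs are the drop percentages quoted in this article.

```python
def gain_needed_to_recover(drop_fraction):
    """Return the fractional gain needed to get back to the old peak
    after the price has fallen by drop_fraction (e.g. 0.92 for 92%)."""
    if not 0 <= drop_fraction < 1:
        raise ValueError("drop_fraction must be in [0, 1)")
    remaining = 1 - drop_fraction  # fraction of the peak price left
    return 1 / remaining - 1


amazon_recovery = gain_needed_to_recover(0.92)    # Amazon's dot-com drop
nvidia_recovery = gain_needed_to_recover(0.095)   # NVIDIA's 9.5% single-day drop

print(f"After a 92% drop, the stock must gain {amazon_recovery:.0%} to recover")
print(f"After a 9.5% drop, the stock must gain {nvidia_recovery:.1%} to recover")
```

A stock that loses 92% is left at 8% of its peak, so it has to multiply 12.5 times over just to break even, whereas a 9.5% dip needs only about a 10.5% rebound.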

Market Maturity: If we go back to the 90s again, we find that almost all the “internet” companies were hyped up on FOMO, but hardly any of them were actually generating revenue. It took quite a while to build a business model that would actually work on the internet. To a neutral reader, this may sound familiar given where we stand with AI today. No matter how impressive Apple’s Math Notes or Bixby’s generative AI seems, people are still sceptical about these companies’ value proposition for the future. The general belief is that they have not figured it out yet. Right now, companies like Meta, Apple, Google, or even some of the startups that NVIDIA itself is funding are simply pouring money into the technology without an actual ROI. Demand for NVIDIA’s chips and data centers ultimately depends on someone actually generating revenue out of AI, which does not seem to be the case as of now.

The bottom line is that the underlying economics of the end market for GPU chips and broader AI ecosystems are weak, and most of NVIDIA’s customers remain loss-making. Large language models are very expensive to build and train. VC firm Sequoia estimated that the AI industry spent $50 billion on NVIDIA chips last year, and the total could even exceed $100 billion in the future. In return, these investments have only generated about $3 billion in revenue.
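A quick sketch of what the Sequoia estimate implies, using only the two figures quoted above (variable names are ours, and the results are illustrative ratios, not Sequoia's own calculation):

```python
# Figures attributed to Sequoia in the text, in USD billions.
chip_spend_bn = 50.0   # estimated industry spend on NVIDIA chips last year
ai_revenue_bn = 3.0    # estimated revenue generated in return

# Revenue earned for every dollar spent on chips.
revenue_per_dollar = ai_revenue_bn / chip_spend_bn

# Gap between spending and revenue, and how many times revenue
# would need to multiply just to match the chip spend.
shortfall_bn = chip_spend_bn - ai_revenue_bn
multiple_needed = chip_spend_bn / ai_revenue_bn

print(f"Revenue per dollar of chip spend: ${revenue_per_dollar:.2f}")
print(f"Shortfall: ${shortfall_bn:.0f}B")
print(f"Revenue would need to grow about {multiple_needed:.1f}x to match spend")
```

In other words, on these estimates the industry earned about six cents for every dollar it spent on chips, and that is before counting any cost other than the chips themselves.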

Another point of concern is the nature of the industry. Large-scale AI implementation for a company involves two phases:

- The compute-intensive training phase, which drives NVIDIA’s current demand

- The deployment phase, where these trained models are used in real-world applications with low power requirements

That’s where the competition comes in.

Competition: NVIDIA’s flagship chip costs roughly $30,000 or more, giving customers plenty of incentive to seek alternatives. “NVIDIA would love to have 100% of it, but customers would not love for NVIDIA to have 100% of it,” said Sid Sheth, co-founder of aspiring rival D-Matrix. “It’s just too big an opportunity. It would be too unhealthy if any one company took all of it.” As the industry moves to the deployment phase, the demand for less expensive chips will increase, and old rivals like Intel and AMD are ready to claim a stake in the game. AMD CEO Lisa Su wants investors to believe there’s plenty of room for many successful companies in the space. “The key is that there are a lot of options there,” Su told reporters in December, when her company launched its most recent AI chip. “I think we’re going to see a situation where there’s not only one solution, there will be multiple solutions.”

Last week, Microsoft revealed that it was using AMD Instinct GPUs to serve its Copilot models. Morgan Stanley analysts took the news as a sign that AMD’s AI chip sales could surpass $4 billion this year, the company’s public target.

Intel recently announced the third version of its AI accelerator, Gaudi 3. This time Intel compared it directly to the competition, describing it as a more cost-effective alternative that is better than NVIDIA’s H100 at running inference and also faster at training models.

The biggest threat to NVIDIA’s data center business may be a change in where processing happens.

Developers are increasingly betting that AI work will move from server farms to the laptops, PCs, and phones we own.

Big models like the ones developed by OpenAI require massive clusters of powerful GPUs for inference, but companies like Apple and Microsoft are developing “small models” that require less power and data and can run on a battery-powered device. They may not be as skilled as the latest version of ChatGPT, but they handle other applications well, such as summarizing text or visual search.

Apple and Qualcomm are updating their chips to run AI more efficiently, adding specialized sections for AI models called neural processors, which can have privacy and speed advantages.

Qualcomm recently announced a PC chip that will allow laptops to run Microsoft AI services on the device. The company has also invested in a number of chipmakers making lower-power processors to run AI algorithms outside of a smartphone or laptop.

Here’s another twist in the story: Chinese AI startup DeepSeek recently released a new generative AI model called R1, positioned as a competitor to OpenAI’s models. Analysts have cited DeepSeek’s models as much less costly than OpenAI’s. DeepSeek said recently that it spent just $5.6 million to train another one of its latest models, V3, while OpenAI spent more than $100 million to train its GPT-4 model. Some Wall Street analysts worried that the lower training costs DeepSeek claimed, due in part to using fewer AI chips, meant US firms were overspending on artificial intelligence infrastructure.
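To put the two training-cost figures side by side, here is a tiny sketch using only the numbers quoted above. Since OpenAI's figure is given as "more than $100 million", the ratio below is a lower bound, and both figures are the companies' own claims rather than audited costs.

```python
# Training costs as quoted in the text, in USD millions.
deepseek_v3_cost_m = 5.6
gpt4_cost_floor_m = 100.0   # "more than $100 million", so treat as a minimum

# How many times more expensive GPT-4 was, at the very least.
cost_ratio_floor = gpt4_cost_floor_m / deepseek_v3_cost_m

# DeepSeek's claimed cost as a share of that minimum.
deepseek_share_pct = deepseek_v3_cost_m / gpt4_cost_floor_m * 100

print(f"GPT-4 cost at least {cost_ratio_floor:.1f}x as much to train")
print(f"DeepSeek V3 cost at most {deepseek_share_pct:.1f}% of GPT-4's training bill")
```

A gap of close to 18x, if it holds up, is the kind of number that makes investors ask how many chips the industry really needs.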

If it turns out that the actual amount of money required to train AI is much less than the current standard, the implications would reach across several sectors and could completely redefine NVIDIA’s market strategy.

With so many unknowns and an infinite number of variables in play, NVIDIA is trying to chart a course through uncharted waters, and the uncertainty surrounding its future sends shockwaves through the entire AI landscape. Here’s how a change in NVIDIA’s position might affect AI in general:

Faster Democratization of AI: With reduced reliance on Nvidia’s expensive GPUs, smaller companies might use cheaper hardware or cloud solutions, making AI capabilities more accessible to a wider range of users, from academic researchers to businesses in non-tech sectors.

Increased Competition in AI Hardware: If NVIDIA faces reduced demand for GPUs, other companies like AMD, Intel, or even custom chip makers like Google with their TPUs (Tensor Processing Units) may seize the opportunity to capture market share. This could lead to a more diverse AI hardware ecosystem and more competition, which could drive innovation and lower prices for AI infrastructure overall.

Companies might invest in more specialized hardware, such as domain-specific accelerators or chips optimized for specific AI applications (e.g., edge computing, real-time inference), leading to a more diversified and tailored approach to AI development.

Focus Shifting to AI Ethics and Governance: If AI becomes more accessible and less costly to train, the rate at which AI is deployed in sensitive areas like criminal justice, hiring, and healthcare could increase. This means a heightened focus on AI ethics and governance would be essential to ensure AI is used responsibly and doesn’t exacerbate societal inequalities.

As AI adoption becomes more widespread, policymakers may start to push for more robust AI regulations. The push for affordable AI could lead to more oversight on safety, fairness, and accountability in AI models. This would encourage a greater focus on ethical AI development across industries.

If the cost to train AI is significantly reduced, the impact on NVIDIA could ripple across the entire AI ecosystem, creating more competition in hardware, faster innovation in AI applications, and a more democratized and accessible AI landscape. While NVIDIA might face challenges, especially in hardware demand, the broader impact on AI would likely be positive in terms of accessibility, speed of development, and real-world applications across industries.

As the cliché goes, every coin has two sides, and so does NVIDIA. The past is clearly not black and white, and who is to say what the future holds? We do not know whether NVIDIA’s meteoric rise will continue or whether it will suffer an Enron-like downfall due to its practices. But what we know for sure is that, despite whatever darkens NVIDIA’s name, it is still going strong right now.
